Chris21 import unexpectedly hangs

A chris21 DET import has hung, seemingly forever. Is there some way to find out what caused it to hang? Could we have a timeout added so that it recovers automatically? Right now there's no way to find out there's an issue until the customer rings to ask why processing has stopped.

Answer

Exactly the same behaviour happened again yesterday at 15:00 UTC, so could you please escalate this as an urgent Bug rather than a Question?

The customer is planning a demonstration of this proof-of-concept environment to their management on Friday, so it needs to be working 100% before then or else the concept will not be proven :-)

Hi Adrian

The chris21 agent does have a timeout, and yours appears to be set to 1 hour. This is a per-request timeout, not a timeout for the whole import, so I'd recommend reducing it. The bulk of the requests made during a full import will be for chunks of 1000 users (as set on your connector), so choose a timeout appropriate for requests of this size in your environment.

There's nowhere that suppresses timeout errors, so if one occurs, the import should immediately fail.
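To illustrate the difference between a per-request timeout and a timeout on the whole import, here is a minimal Python sketch. It is not the chris21 agent's code: `import_all`, `fetch_chunk` and `worst_case_wait_s` are hypothetical names, and `fetch_chunk` is assumed to raise `TimeoutError` when a single request exceeds its timeout.

```python
from typing import Callable, Iterator, List

# Hypothetical signature: (offset, chunk_size, timeout_s) -> list of records.
FetchChunk = Callable[[int, int, float], List[dict]]


def import_all(fetch_chunk: FetchChunk, chunk_size: int,
               request_timeout_s: float) -> Iterator[dict]:
    """Yield records chunk by chunk until a short (or empty) chunk is returned."""
    offset = 0
    while True:
        # The timeout bounds this one request only, not the loop as a whole.
        # A TimeoutError is not caught here, so it aborts the import.
        chunk = fetch_chunk(offset, chunk_size, request_timeout_s)
        yield from chunk
        if len(chunk) < chunk_size:
            return
        offset += chunk_size


def worst_case_wait_s(request_count: int, request_timeout_s: float) -> float:
    """Upper bound on total time if every request finishes just inside its timeout."""
    return request_count * request_timeout_s
```

For example, an import needing 5 requests (an illustrative figure) with a 1 hour per-request timeout could in principle run for close to `worst_case_wait_s(5, 3600.0)` seconds, that is 5 hours, without any timeout error being raised. This is why a shorter per-request timeout is the safer choice.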

Thanks Beau. I've set my timeout to 10 minutes instead of one hour.

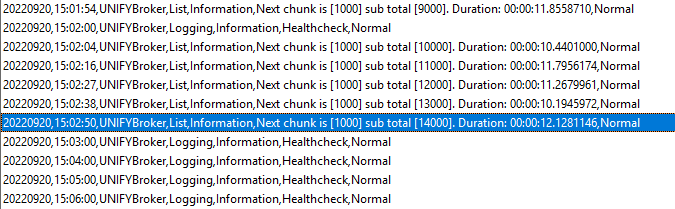

In the UNIFYBroker log (private attachment above) I see the following chunking messages (each chunk being a request):

After that, there are no chunk log entries recorded until the service is forcibly restarted. So the Chris21 timeout does not appear to have worked correctly - hours after the last request started the connector import is still running, and Cancel Import does not stop it from running. The connector normally returns about 20,000 entities. Are you able to make it so that the timeout works?

The timeout is part of the underlying web request framework, so it's not something we control, other than setting it. It definitely looks to be getting set, though.

I did identify a possible threading issue with the chunking requests code, which would have the same symptoms as a non-functional timeout. I've provided a patch to David.
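For readers wondering how a threading problem can look like a timeout that never fires, the following Python sketch shows one general way to put an outer deadline around a chunk request, so that a stalled worker is reported even when the request's own timeout is never triggered. This is not the patch mentioned above, whose contents are not shown in this thread; `fetch_with_watchdog` and `ChunkStalled` are hypothetical names.

```python
import threading
from typing import Callable, List


class ChunkStalled(Exception):
    """Raised when a chunk request has not finished within the outer deadline."""


def fetch_with_watchdog(fetch: Callable[[], List[dict]],
                        deadline_s: float) -> List[dict]:
    """Run fetch() on a worker thread and give up waiting after deadline_s."""
    outcome = {}  # holds either "value" or "error" once the worker finishes
    done = threading.Event()

    def worker() -> None:
        try:
            outcome["value"] = fetch()
        except BaseException as exc:  # hand any failure back to the caller
            outcome["error"] = exc
        finally:
            done.set()

    # Daemon thread: a worker that never returns cannot block process exit.
    threading.Thread(target=worker, daemon=True).start()

    if not done.wait(deadline_s):
        # The worker is abandoned, not killed; the caller can now fail the import.
        raise ChunkStalled(f"no response within {deadline_s} seconds")
    if "error" in outcome:
        raise outcome["error"]
    return outcome["value"]
```

The design choice here is that the caller never waits indefinitely on the worker: it either gets the chunk, gets the worker's own error, or gets `ChunkStalled` once the deadline passes.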

Awesome thanks. I'll get David to install it after the customer's internal demo on Friday, so we can try it out.

Hi Adrian,

Were you able to test out the patch that Beau provided by any chance?

Hi Matt,

The customer changed the demo to be non-live (screen snapshots) and since this was only a POC they said not to worry about it.

Since the problem appeared to be related to chris21 API response timeouts when heavy filtering on the service side filters out >99% of records, this is unlikely to be an issue if/when the customer comes back to us and goes live, as we'll have access to all their data then, not just a handful of records filtered out of tens of thousands.

So, the patch is untested I'm afraid.

No worries - thanks for the confirmation. I'll leave the ticket open for now as we'll target testing the threading issue that Beau identified, and roll any fix we do find into the 6.0 release.

This has been implemented and is available in the release of UNIFYConnect Module for Chris21, which will be made available shortly.
